Source: View original notebook on GitHub


Closed Form Solution for Linear Regression

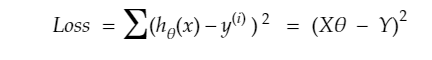

Loss function:

- This is derived the same way as for gradient descent, or as in method 1 (the formula approach), by setting the derivative of the loss function to zero. The only difference is that everything is written in matrix notation.

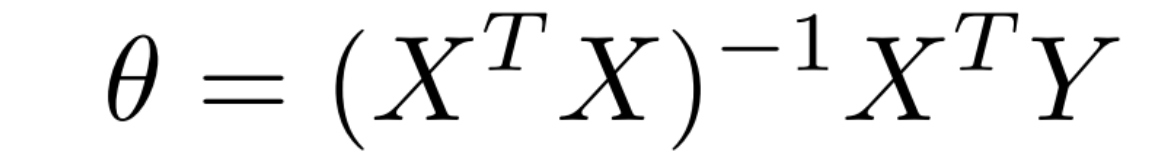

- Solving further for the θ vector gives the normal equation: $\theta = (X^T X)^{-1} X^T y$
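The claim that θ = (XᵀX)⁻¹Xᵀy is the point where the derivative of the loss vanishes can be checked numerically. This is a minimal sketch that assumes synthetic data (not the notebook's linearX/linearY files) and the loss J(θ) = ½‖Xθ − y‖², whose gradient is Xᵀ(Xθ − y):

```python
import numpy as np

# Synthetic data (assumption for illustration): y = 1 + 0.5 x + noise
rng = np.random.default_rng(0)
x = rng.uniform(0, 10, size=50)
y = 1.0 + 0.5 * x + rng.normal(scale=0.1, size=50)

# Design matrix with a leading column of ones for the intercept
X = np.column_stack((np.ones_like(x), x))

# Closed-form (normal equation) solution
theta = np.linalg.pinv(X.T @ X) @ (X.T @ y)

# Gradient of J(theta) = 1/2 * ||X theta - y||^2, evaluated at the solution
grad = X.T @ (X @ theta - y)

print("theta:", theta)
print("gradient at theta:", grad)  # zero up to floating-point error
```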

Derivation (thanks to Prateek Bhaiya)

- Here X has shape (n_samples, n_features + 1), and its first column, X[:, 0], is all ones, so that θ₀ acts as the intercept.
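A compact version of the derivation, assuming the loss is written as half the sum of squared errors (the ½ factor is a convention and does not change the minimiser) and that XᵀX is invertible:

```latex
J(\theta) = \tfrac{1}{2}\,(X\theta - y)^T (X\theta - y)
          = \tfrac{1}{2}\left(\theta^T X^T X \theta - 2\,\theta^T X^T y + y^T y\right)

\nabla_\theta J(\theta) = X^T X \theta - X^T y = 0
\quad\Longrightarrow\quad X^T X \theta = X^T y
\quad\Longrightarrow\quad \theta = (X^T X)^{-1} X^T y
```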

Code

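import numpy as np
import pandas as pd
import matplotlib.pyplot as plt

# load the data and convert to NumPy arrays (.values) so that the
# matrix products below return arrays rather than DataFrames
X = pd.read_csv('Datasets/linearX.csv', delimiter=',').values
Y = pd.read_csv('Datasets/linearY.csv', delimiter=',').values

# add a 0th column of all ones (the intercept term)
ones = np.ones(X.shape[0])
X = np.column_stack((ones, X))

plt.scatter(X[:, 1], Y)
plt.show()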

def hypo(X, theta):
    # single-feature hypothesis: h(x) = theta_0 + theta_1 * x
    return theta[0] + theta[1] * X
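`hypo` takes only the feature column, so it is specific to one feature. Because the design matrix already carries the column of ones, the same prediction can be written as the product X @ θ, which also works unchanged for several features. A minimal sketch; `hypo_matrix` and the sample values are hypothetical:

```python
import numpy as np

def hypo(x, theta):
    # Single-feature hypothesis, as in the notebook: theta_0 + theta_1 * x
    return theta[0] + theta[1] * x

def hypo_matrix(X, theta):
    # Hypothetical helper: X already contains the column of ones,
    # so the prediction is just the matrix-vector product X @ theta
    return X @ theta

x = np.array([0.0, 1.0, 2.0, 3.0])
theta = np.array([2.0, 0.5])
X = np.column_stack((np.ones_like(x), x))

print(hypo(x, theta))         # predictions 2.0, 2.5, 3.0, 3.5
print(hypo_matrix(X, theta))  # same values
```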

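def closed_form_solution(X, Y):
    # normal equation: theta = pinv(X^T X) @ (X^T Y)
    # @ is used for matrix multiplication; pinv is the pseudo-inverse,
    # which still returns a result when X^T X is singular
    first_part = np.linalg.pinv(X.T @ X)
    second_part = X.T @ Y
    # Y has shape (n_samples, 1), so flatten the (n_features + 1, 1) result to 1-D
    return (first_part @ second_part).flatten()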
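`closed_form_solution` inverts XᵀX with `np.linalg.pinv`. A minimal sketch, on synthetic data (an assumption), comparing it with two other standard NumPy routes to the same θ: `np.linalg.solve` on the normal equations (no explicit inverse) and `np.linalg.lstsq` on X directly (never forms XᵀX, which is numerically gentler when features are nearly collinear):

```python
import numpy as np

# Synthetic data (assumption for illustration): y = 3 - 2 x + noise
rng = np.random.default_rng(1)
x = rng.uniform(0, 5, size=30)
y = 3.0 - 2.0 * x + rng.normal(scale=0.2, size=30)
X = np.column_stack((np.ones_like(x), x))

# 1) Pseudo-inverse of X^T X (the approach used in closed_form_solution)
theta_pinv = np.linalg.pinv(X.T @ X) @ (X.T @ y)

# 2) Solve X^T X theta = X^T y without forming an inverse
theta_solve = np.linalg.solve(X.T @ X, X.T @ y)

# 3) Least squares on X directly; lstsq returns (solution, residuals, rank, singular values)
theta_lstsq, *_ = np.linalg.lstsq(X, y, rcond=None)

print(theta_pinv)
print(theta_solve)
print(theta_lstsq)
```

For a well-conditioned single-feature fit like this one, all three agree. `pinv` additionally returns the minimum-norm solution when XᵀX is singular, where `np.linalg.solve` would fail.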

theta = closed_form_solution(X,Y)

theta

Output:

array([9.90309198e-01, 7.85559346e-04])

intercept = theta[0]

slope = theta[1]
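As a cross-check on the intercept and slope, `np.polyfit` with degree 1 fits the same least-squares line. Note that it returns coefficients highest power first, i.e. `[slope, intercept]`, the reverse of `theta`. A minimal sketch on synthetic data (an assumption, not the notebook's dataset):

```python
import numpy as np

# Synthetic data (assumption for illustration): y = 0.7 + 1.8 x + noise
rng = np.random.default_rng(2)
x = rng.uniform(-1, 1, size=40)
y = 0.7 + 1.8 * x + rng.normal(scale=0.05, size=40)

# Closed-form fit, as in the notebook
X = np.column_stack((np.ones_like(x), x))
theta = np.linalg.pinv(X.T @ X) @ (X.T @ y)
intercept, slope = theta[0], theta[1]

# polyfit for deg=1 returns [slope, intercept] (highest power first)
slope_pf, intercept_pf = np.polyfit(x, y, 1)

print(intercept, slope)
print(intercept_pf, slope_pf)
```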

Y_pred = hypo(X[:, 1], theta)

plt.scatter(X[:, 1], Y, marker='*')
plt.plot(X[:, 1], Y_pred, 'r')
plt.show()

from sklearn.metrics import r2_score

score = r2_score(Y, Y_pred)
score

Output:

0.43818504557919835
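`r2_score` computes the coefficient of determination R² = 1 − SS_res / SS_tot, so a score of about 0.44 means the fitted line accounts for roughly 44% of the variance of Y on this data. A minimal sketch computing it from the definition; `r2_manual` is a hypothetical helper and the data is synthetic:

```python
import numpy as np
from sklearn.metrics import r2_score

def r2_manual(y_true, y_pred):
    # Hypothetical helper: R^2 straight from its definition
    y_true = np.asarray(y_true, dtype=float).ravel()
    y_pred = np.asarray(y_pred, dtype=float).ravel()
    ss_res = np.sum((y_true - y_pred) ** 2)         # residual sum of squares
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)  # total sum of squares
    return 1.0 - ss_res / ss_tot

# Synthetic data (assumption for illustration): a noisy line
rng = np.random.default_rng(3)
x = rng.uniform(0, 10, size=60)
y = 2.0 + 0.3 * x + rng.normal(scale=1.0, size=60)

# Closed-form fit and predictions
X = np.column_stack((np.ones_like(x), x))
theta = np.linalg.pinv(X.T @ X) @ (X.T @ y)
y_pred = X @ theta

print(r2_manual(y, y_pred), r2_score(y, y_pred))
```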